DAIMON Robotics Wants to Give Robot Hands a Sense of Touch

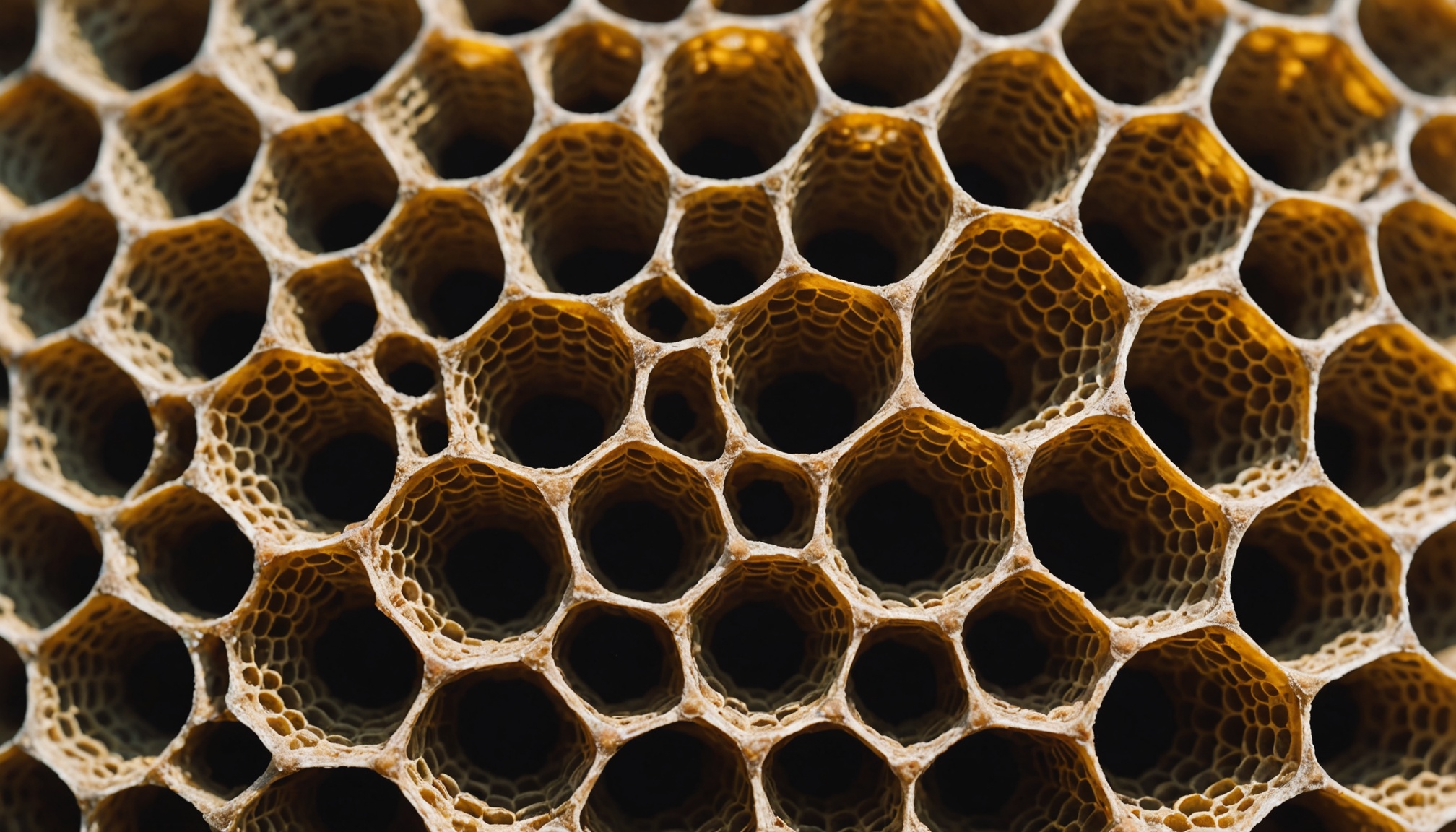

DAIMON Robotics has released Daimon-Infinity, a large-scale tactile sensing dataset designed to accelerate embodied AI development across household and industrial tasks. The dataset reflects a strategic shift toward multimodal physical understanding. Rather than relying on vision-only training, it integrates high-resolution touch feedback from over 110,000 sensing units per fingertip. Backed by Google DeepMind, Northwestern University, and the National University of Singapore (NUS), the initiative signals growing recognition that robot manipulation at scale requires tactile grounding. At the AI infrastructure layer, this addresses a critical gap: most foundation models lack embodied feedback loops, which makes real-world deployment brittle. The release could reshape how teams approach sim-to-real transfer and dexterous control.

Modelwire context

Analyst take

The more consequential detail buried in the announcement is the sensing resolution. At 110,000 units per fingertip, this is not an incremental improvement over prior tactile datasets; it is a density that makes previous benchmarks largely incomparable. As a result, teams currently training on existing tactile corpora may find that their sim-to-real pipelines must be rebuilt from scratch to take advantage of this data, rather than simply retrained.

The related Modelwire archive does not offer a clean connection here. The Flock Safety surveillance story from April 30 concerns computer vision and institutional access governance, which is a different domain entirely. Daimon-Infinity belongs to a separate thread: the race to build proprietary physical-world datasets that competitors cannot quickly replicate. That dynamic is closer to what we have covered on foundation model data moats than to surveillance infrastructure. The Google DeepMind backing is the structural tell: it suggests the dataset is meant to anchor a broader training pipeline, not simply to serve as a public research contribution.

Watch whether Google DeepMind publishes a manipulation model within the next twelve months that cites Daimon-Infinity as a primary training source. If it does, the dataset will have moved from academic release to competitive infrastructure, and its access terms will matter enormously.

This analysis is generated by Modelwire’s editorial layer from our archive and the summary above. It is not a substitute for the original reporting.

Mentions: DAIMON Robotics · Daimon-Infinity · Google DeepMind · Northwestern University · National University of Singapore

Modelwire Editorial

This synthesis and analysis was prepared by the Modelwire editorial team. We use advanced language models to read, ground, and connect the day’s most significant AI developments, providing original strategic context that helps practitioners and leaders stay ahead of the frontier.

Modelwire summarizes, we don’t republish. The full content lives on spectrum.ieee.org. If you’re a publisher and want a different summarization policy for your work, see our takedown page.