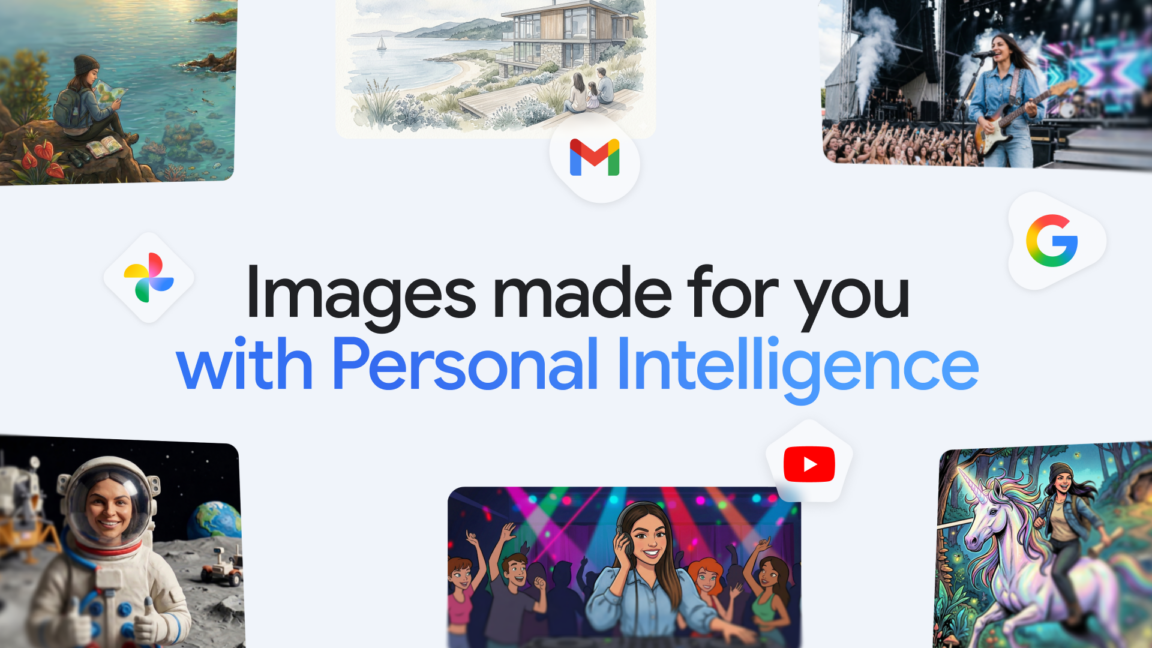

Gemini can now create personalized AI images by digging around in Google Photos

Google has integrated Gemini with Google Photos to enable personalized image generation, allowing users to reference their own photo library when creating AI images. This feature deepens Gemini's multimodal capabilities by connecting generative AI to personal user data.

Modelwire context

Skeptical read: The summary does not name the model powering this feature. According to The Verge's same-day coverage, Google is using Nano Banana 2, a relatively lightweight model, which raises real questions about how sophisticated the personalization is relative to how it is being positioned. There is also no mention of what data leaves the device, or whether Photos content is used to further train generative models.

This is the third Gemini surface expansion in roughly a week. The Verge's April 16 piece on the same feature names the underlying model and frames this as part of Google's broader 'Personal Intelligence' push. That framing matters: Google is systematically connecting Gemini to data stores users already trust (photos, browsing history via Chrome Skills from April 14), which is a coherent strategy but one that concentrates a lot of personal data under a single AI layer. The privacy implications of that consolidation are not being discussed in any of these launch posts.

Watch whether Google publishes any documentation clarifying whether Photos content used in generation is retained server-side or processed on-device. If no such disclosure appears within 30 days, that absence itself is the story.


This analysis is generated by Modelwire’s editorial layer from our archive and the summary above. It is not a substitute for the original reporting.

Mentions: Google · Gemini · Google Photos

Modelwire summarizes — we don’t republish. The full article lives on arstechnica.com. If you’re a publisher and want a different summarization policy for your work, see our takedown page.