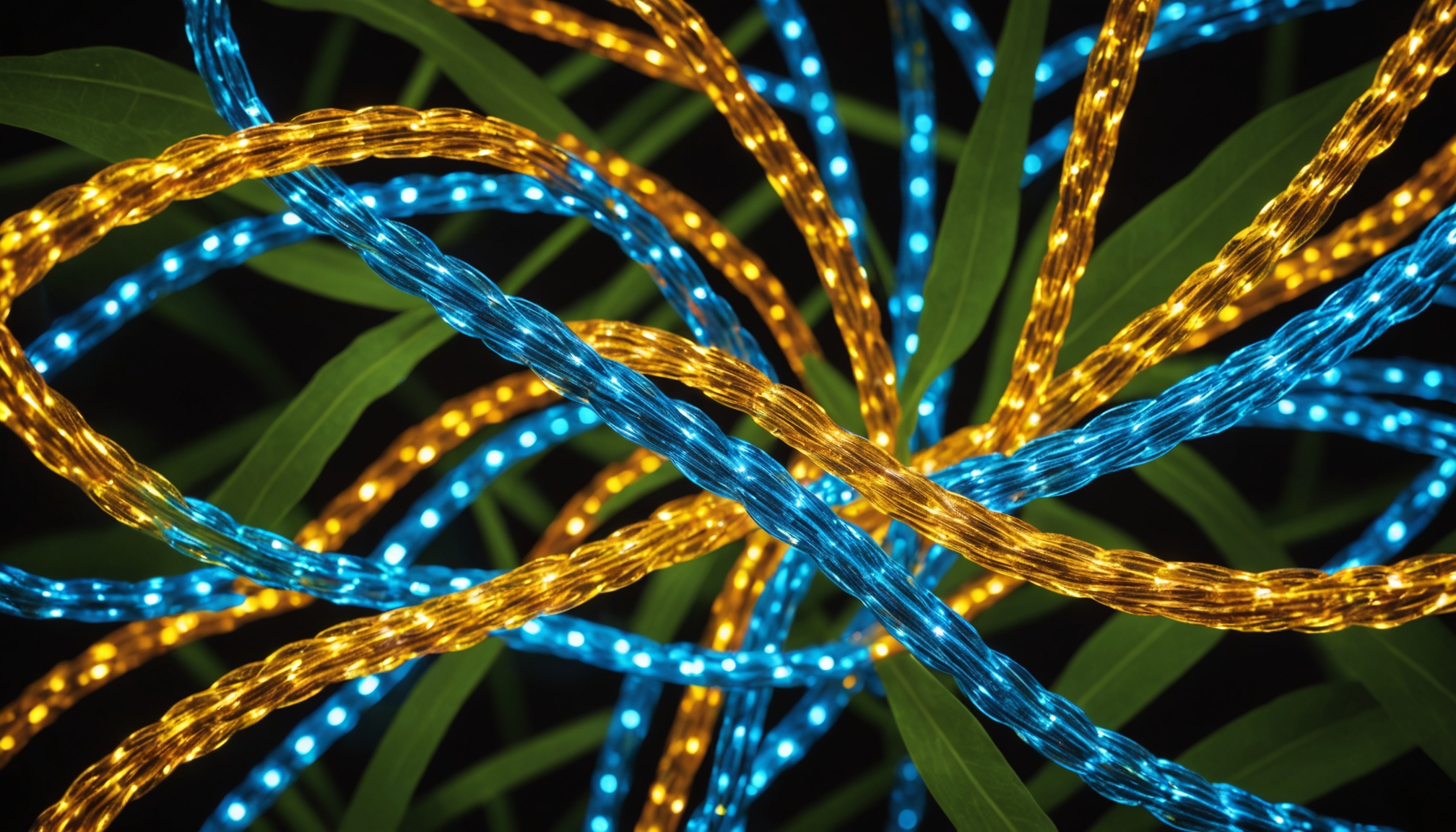

Nvidia, Corning Partner on Large-Scale AI Infrastructure Buildout

Nvidia and Corning's joint optical fiber manufacturing initiative addresses a critical bottleneck in AI infrastructure scaling. As model training and inference demands accelerate, networking capacity has become as constraining as compute itself. This partnership signals that hyperscalers view fiber optics as essential to sustaining the next wave of large-scale model deployment, shifting supply-chain strategy from chip-centric to connectivity-centric. The move reflects industry recognition that data movement, not just processing power, now limits AI infrastructure expansion.

Modelwire context

Analyst take

Corning is primarily a materials and manufacturing company, not a traditional tech vendor, and its entry into the AI infrastructure conversation signals that the supply-chain stress is now reaching industrial suppliers well outside the semiconductor stack. The partnership effectively makes fiber optic capacity a named line item in AI procurement strategy, which is a different kind of commitment than simply ordering more cable.

This fits directly alongside the May 1st reporting that 'Big tech's AI spending balloons to $725 billion this year,' which framed infrastructure depth as the primary competitive lever. That story focused on compute and chip procurement; the Nvidia-Corning deal extends the same logic to physical connectivity. It also reinforces the earlier piece 'AI Demand Is Outpacing the Scaffolding to Support It,' which identified operational infrastructure as the binding constraint on deployment at scale. Together, these stories sketch a consistent picture: capital is now flowing into every layer of the stack, not just GPUs.

Watch whether other hyperscalers (Microsoft, Google, Amazon) announce comparable fiber or optical interconnect partnerships within the next two quarters. If they do, it confirms that networking capacity has become a procurement priority on par with compute; if Nvidia-Corning remains an outlier, this may reflect Nvidia-specific data center architecture choices rather than an industry-wide shift.

This analysis is generated by Modelwire’s editorial layer from our archive and the summary above. It is not a substitute for the original reporting.

Modelwire Editorial

This synthesis and analysis was prepared by the Modelwire editorial team. We use advanced language models to read, ground, and connect the day’s most significant AI developments, providing original strategic context that helps practitioners and leaders stay ahead of the frontier.

Modelwire summarizes; we don’t republish. The full content lives on aibusiness.com. If you’re a publisher and want a different summarization policy for your work, see our takedown page.