'The Biggest Student Data Privacy Disaster in History': Canvas Hack Shows the Danger of Centralized EdTech

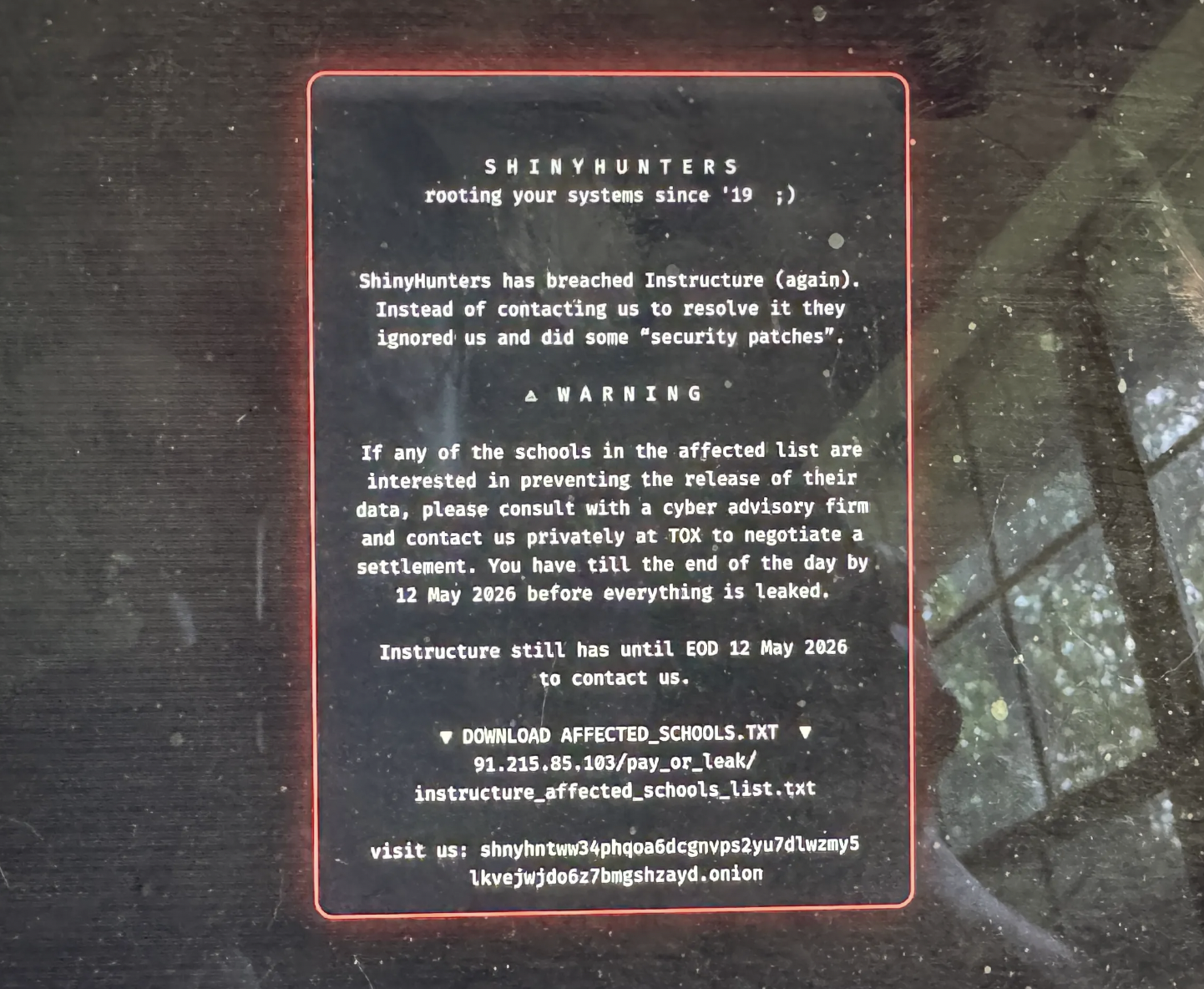

A breach of Canvas, a widely deployed learning management system, exposed sensitive student records, including medical details, accessibility needs, and reports of sexual assault. The incident underscores a critical vulnerability in the AI and edtech infrastructure stack: centralized platforms that aggregate high-risk personal data create single points of failure, with cascading harm across entire institutions. For AI practitioners building LLM-powered educational tools, the breach signals that data governance and access controls must precede feature velocity, and that federated or privacy-preserving architectures may become table stakes in regulated sectors.

Modelwire context

Analyst take

The breach isn't just a security incident; it's a stress test of the entire centralized LMS model that most K-12 and higher-education institutions have quietly standardized on. Canvas's near-monopoly position in the sector means the blast radius of any single failure is institutional, not individual.

This connects directly to the arXiv case study from early May on RAG chatbots in medical settings, which found that governance rigor consistently lags behind deployment speed in regulated domains. The Canvas breach is the production-scale version of that finding: sensitive data (medical records, assault reports, accessibility needs) was aggregated into a platform whose security posture was not commensurate with the risk profile of what it held. The broader infrastructure pressure covered in 'AI Demand Is Outpacing the Scaffolding to Support It' (AI Business, May 1) adds further context, as institutions are adopting AI-adjacent edtech faster than their data governance frameworks can absorb it. The pattern across all three stories is the same: capability deployment is outrunning the controls that regulated environments actually require.

Watch whether Canvas's parent company, Instructure, faces formal regulatory action under FERPA within the next 90 days. A federal enforcement move would accelerate procurement reviews at competing institutions and give federated LMS alternatives a concrete opening they currently lack.

This analysis is generated by Modelwire’s editorial layer from our archive and the summary above. It is not a substitute for the original reporting.

Modelwire Editorial

This synthesis and analysis was prepared by the Modelwire editorial team. We use advanced language models to read, ground, and connect the day’s most significant AI developments, providing original strategic context that helps practitioners and leaders stay ahead of the frontier.

Modelwire summarizes, we don’t republish. The full content lives on 404media.co. If you’re a publisher and want a different summarization policy for your work, see our takedown page.