Widening the Gap: Exploiting LLM Quantization via Outlier Injection

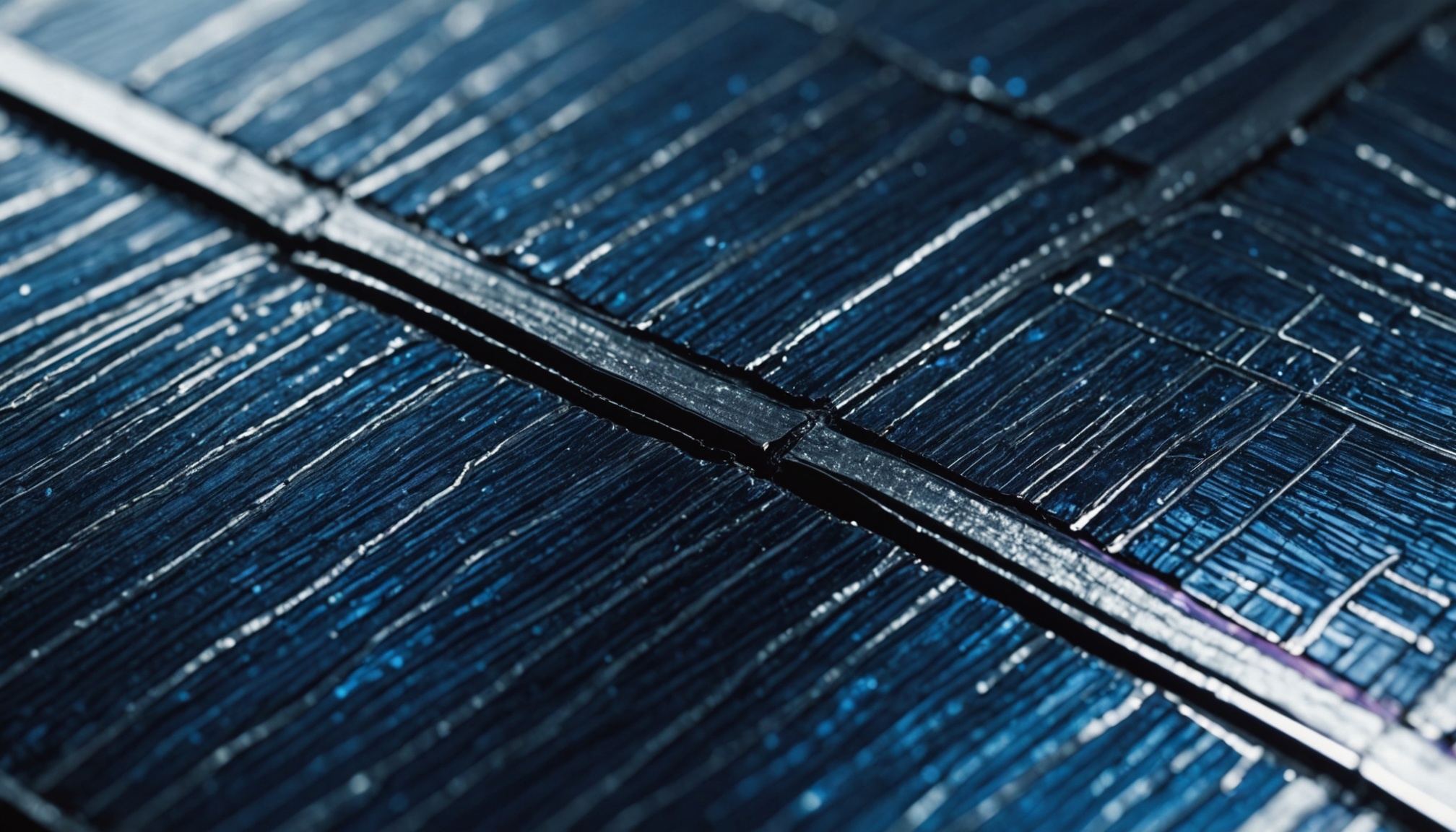

Researchers have demonstrated the first practical attack that reliably poisons large language models through quantization, a critical deployment step that compresses models for edge devices. Unlike prior work, which was limited to simple quantization schemes, this approach exploits vulnerabilities in advanced quantization methods through outlier injection, so that dormant malicious behavior activates only after users compress the model. The finding exposes a supply-chain risk in the quantization pipeline: adversaries can distribute seemingly safe full-precision models that turn hostile once quantized, undermining the security assumptions behind efficient LLM deployment at scale.
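The summary does not spell out the mechanics, so the sketch below only illustrates why a single outlier matters, under a loudly stated assumption: it uses plain symmetric absmax round-to-nearest quantization over one small block, the kind of simple scheme earlier attacks targeted, not the advanced methods this paper attacks. Because the block's largest magnitude sets the quantization step, injecting one large weight inflates the step and pushes every other weight in the block onto a much coarser grid. The helper names `absmax_quantize` and `dequantize`, the 4-bit width, and the weight values are all hypothetical.

```python
import numpy as np

def absmax_quantize(block: np.ndarray, bits: int = 4):
    """Toy symmetric round-to-nearest quantizer for one weight block (assumed scheme).

    Returns signed integer codes and the step size (scale)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    # The largest magnitude in the block sets the step; guard against all-zero blocks.
    scale = max(float(np.abs(block).max()), 1e-12) / qmax
    codes = np.clip(np.round(block / scale), -qmax, qmax).astype(np.int8)
    return codes, scale

def dequantize(codes: np.ndarray, scale: float) -> np.ndarray:
    return codes.astype(np.float64) * scale

# Hypothetical weights for one small block.
weights = np.array([0.10, -0.05, 0.07, 0.02, -0.08, 0.03, 0.01, -0.02])

# Hypothetical injection: overwrite one entry with a large outlier.
injected = weights.copy()
injected[0] = 1.0

for name, w in [("clean", weights), ("with outlier", injected)]:
    codes, scale = absmax_quantize(w)
    # Rounding error on the untouched weights (indices 1..7).
    err = np.abs(dequantize(codes, scale)[1:] - w[1:]).max()
    print(f"{name}: step={scale:.4f} codes={codes.tolist()} max error on other weights={err:.4f}")
```

A wider step also means a wider range of full-precision values rounds to each code. One plausible reading of the title is that this extra room is what the attacker exploits, but that is our interpretation, not a description of the authors' method.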

Modelwire context

Explainer

The critical detail the summary gestures at but doesn't fully land: the attack is built around the quantization process itself, meaning the malicious behavior is calibrated to the numerical distortions quantization introduces, not merely hidden from pre-deployment evaluation. The adversary isn't just hiding a backdoor; they're engineering it to activate because of compression, not despite it.
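The idea that a backdoor can activate because of compression can be sketched with rounding cells. Assuming symmetric absmax round-to-nearest quantization (a deliberate simplification; advanced methods make these constraints much harder to compute), each weight can move anywhere inside an interval without changing its quantized code, provided the weight that sets the scale stays put. An attacker who has already placed malicious behavior in the quantized codes could then pick any full-precision point inside those intervals, for example one that behaves benignly, and the compressed model would be unaffected. This is roughly how earlier attacks on simple schemes have been framed; `preserving_box`, the 0.49 safety margin, and the toy "repair" step are hypothetical illustrations, not the authors' procedure.

```python
import numpy as np

def absmax_quantize(block: np.ndarray, bits: int = 4):
    """Toy symmetric round-to-nearest quantizer (assumed scheme, not the paper's)."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(float(np.abs(block).max()), 1e-12) / qmax
    codes = np.clip(np.round(block / scale), -qmax, qmax).astype(np.int8)
    return codes, scale

def preserving_box(block: np.ndarray, bits: int = 4, margin: float = 0.49):
    """Hypothetical helper: per-weight bounds that leave codes and scale unchanged."""
    codes, scale = absmax_quantize(block, bits)
    absmax = float(np.abs(block).max())
    # Stay inside each weight's rounding cell (margin < 0.5 avoids rounding ties).
    lo = (codes - margin) * scale
    hi = (codes + margin) * scale
    # Never exceed the current absmax, so the scale cannot grow.
    lo = np.maximum(lo, -absmax)
    hi = np.minimum(hi, absmax)
    # Pin the weight that defines the scale, so it cannot shrink either.
    pivot = int(np.argmax(np.abs(block)))
    lo[pivot] = hi[pivot] = block[pivot]
    return lo, hi

rng = np.random.default_rng(0)
original = rng.normal(0.0, 0.05, size=16)
lo, hi = preserving_box(original)

# Hypothetical "repair": an arbitrary edit projected back into the box.
# A real attack would choose the edit to remove malicious behavior in full precision.
repaired = np.clip(original - 0.01, lo, hi)

codes_before, scale_before = absmax_quantize(original)
codes_after, scale_after = absmax_quantize(repaired)
print("full-precision weights changed:", not np.allclose(original, repaired))
print("quantized codes identical:", np.array_equal(codes_before, codes_after))
print("scale identical:", scale_before == scale_after)
```

Any full-precision edit that stays inside the box is invisible after compression, which is why evaluating only the full-precision model says little about the quantized one.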

This sits in a growing cluster of model-poisoning research on the site. The MetaBackdoor paper from the same day showed that architectural components like positional encoding can become attack surfaces that bypass content-based defenses. Outlier injection follows the same logic: find a transformation the model must undergo in production and weaponize it. Together, these papers suggest the threat model for LLM security needs to account for the entire deployment pipeline, not just training data and inference inputs. The governance paper from May 14, 'Behavioural Assurance Cannot Verify the Safety Claims Governance Now Demands,' is directly relevant here: if behavioral audits can't catch hidden objectives, they almost certainly can't catch a backdoor that only activates post-compression.

Watch whether major model hubs like Hugging Face introduce quantization-aware integrity checks or provenance attestation within the next six months. If they don't respond with tooling, this attack class will remain practically undetectable at the distribution layer.


This analysis is generated by Modelwire’s editorial layer from our archive and the summary above. It is not a substitute for the original reporting.

Mentions: LLM · Quantization · Outlier Injection

Modelwire Editorial

This synthesis and analysis was prepared by the Modelwire editorial team. We use advanced language models to read, ground, and connect the day’s most significant AI developments, providing original strategic context that helps practitioners and leaders stay ahead of the frontier.

Modelwire summarizes; we don’t republish. The full content lives on arxiv.org. If you’re a publisher and want a different summarization policy for your work, see our takedown page.